Overcoming chaos on the way to the Moon

Lesson XVI from the Lunar Landings

For Moon landing aficionados, December is the month of both the first and the last Apollo Moon missions. Exactly 49 years ago, Apollo 17 closed the first chapter of human exploration of the Moon when it launched on December 7th, 1972. It was a short chapter, which had opened only 4 years earlier when the first humans left the immediate vicinity of the Earth and ventured forth into the cosmos on the voyage of Apollo 8 to the Moon. Apollo 8 launched on the 21st of December, 1968, and achieved lunar orbit on the 24th.

Later that day, on Christmas Eve, the astronauts broadcast a short televised message to the three and a half billion people whom they had left back on Earth, 385,000 km (about 240,000 miles) away. After discussing the mission and their impressions, they sent a more spiritual message: the three astronauts read the story of creation, beginning at Genesis, chapter 1, verse 1.

In the beginning God created the heaven and the earth.

And the earth was without form, and void; and darkness was upon the face of the deep.

And the Spirit of God moved upon the face of the waters. And God said, Let there be light: and there was light.

And God saw the light, that it was good: and God divided the light from the darkness.

In the Bible’s original Hebrew, the phrase “without form, and void” is תֹהוּ וָבֹהוּ (pronounced Tohu Va’vohu), which is translated from modern Hebrew into modern English as “chaos”.

While the astronauts, scientists, and engineers would have rejected the mere thought that their endeavors succeeded as a result of chaotic happenstance or random luck, they would have eagerly embraced a modern reliability engineering discipline called Chaos Engineering. In retrospect, many of their processes were predecessors of modern chaos engineering.

Chaos engineering has been defined as follows:

Chaos Engineering is the discipline of experimenting on a system in order to build confidence in the system’s capability to withstand turbulent conditions in production.

— https://principlesofchaos.org/

In other words, chaos engineering is a practice in which you test the resilience and reliability of a system by shutting down or otherwise temporarily damaging parts of it, to make sure that the system can continue working in this damaged condition (you damage it virtually, of course; you don’t attack a server with an axe to see what happens!).

Unlike in traditional testing, there is usually an element of randomness to each chaos “experiment” so that each time you run a chaos experiment it will be different.

Unlike in traditional testing, you also perform controlled chaos experiments in the production environment so that you have confidence that it will survive damage in uncontrolled situations too.

To those unfamiliar with the tenets of chaos engineering, it is a seemingly dangerous practice where one “randomly shuts down parts of the environment in production and sees what happens”. In reality, however, chaos engineering is a rigorous engineering method where you define experiments ahead of time and perfect them in development environments well before you attempt them in production.

Chaos experiments cover every aspect of an application — from the hardware (disconnect a server from the network and see what happens), through software (restarting a Kubernetes pod is probably the most popular chaos experiment) all the way through human processes (game days are large scale chaos experiments where you also find out how humans react to failures).
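To make the shape of such an experiment concrete, here is a minimal sketch in Python. It does not talk to Kubernetes or any real platform; `SimulatedService`, its replica names, and `run_experiment` are hypothetical stand-ins I made up for illustration. The sketch shows the usual steps: confirm the steady state, inject a randomly chosen failure, check whether the steady state still holds, and always roll back.

```python
import random
from dataclasses import dataclass, field


@dataclass
class SimulatedService:
    """Toy stand-in for a replicated service (hypothetical, not a real API)."""
    replicas: dict = field(
        default_factory=lambda: {"pod-a": True, "pod-b": True, "pod-c": True}
    )

    def handle_request(self) -> bool:
        # A request succeeds as long as at least one replica is healthy.
        return any(self.replicas.values())

    def kill(self, name: str) -> None:
        self.replicas[name] = False

    def restart(self, name: str) -> None:
        self.replicas[name] = True


def run_experiment(service: SimulatedService, rng: random.Random) -> dict:
    """Run one chaos experiment and report whether the steady state held."""
    # 1. Steady-state hypothesis: the service answers before we touch anything.
    if not service.handle_request():
        return {"victim": None, "steady_state_held": False, "aborted": True}

    # 2. Inject a randomly chosen failure, so that each run can differ.
    victim = rng.choice(sorted(service.replicas))
    service.kill(victim)
    try:
        # 3. Does the hypothesis still hold while the system is damaged?
        held = service.handle_request()
    finally:
        # 4. Always undo the damage, even if the check itself raised.
        service.restart(victim)
    return {"victim": victim, "steady_state_held": held, "aborted": False}


if __name__ == "__main__":
    print(run_experiment(SimulatedService(), random.Random()))
```

Passing in the random number generator is a deliberate choice: a seeded generator lets you replay the exact same experiment in a development environment before you let it pick its own victims in production.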

Now, restarting a service or a Kubernetes pod is a very cheap kind of experiment; it takes seconds and doesn’t cost anything. Experiments of this type were impossible during the race to the Moon because inducing a failure in a rocket or spacecraft would have cost all the time and money needed to produce them — in reality, not the virtual world.

So instead of executing chaos experiments in real missions, NASA did the next best thing — simulations.

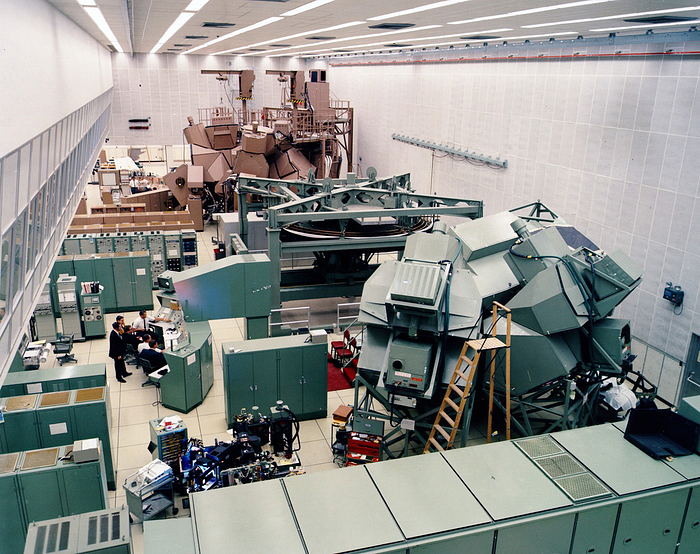

Simulations were practice missions where the astronauts practiced flying missions in space and the engineers on the ground (flight controllers) practiced supporting them. Real flights were preceded by months upon months of simulated flights where the astronauts “flew to the Moon” while remaining in buildings in Houston and Cape Canaveral (there were two sets of simulators). While they flew, the IBM computers in the Real-Time Computer Complex (RTCC) fed the simulated spacecraft and the mission control consoles with data that mimicked what they would be seeing on the way to the Moon.

But practicing a flight where everything goes well is easy, so the simulation supervisors (SimSups) would inject failures that the astronauts and engineers had to solve; otherwise the mission would end in a simulated abort or, even worse, a simulated loss of crew.

Each time the SimSups came up with a new failure mode, the flight controllers would come up with a new procedure (or run book) that would resolve the problem and allow the mission to continue.
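The loop of "new failure mode, then new run book" can be sketched in a few lines of Python. This is only an illustration of the idea, not a description of NASA's actual procedures: the `RunbookRegistry` class, the failure mode name `sensor-dropout`, and the returned strings are all hypothetical.

```python
from typing import Callable, Dict, List

# A run book is modeled here as a function that returns the action taken.
Runbook = Callable[[], str]


class RunbookRegistry:
    """Maps known failure modes to procedures and records the gaps."""

    def __init__(self) -> None:
        self._runbooks: Dict[str, Runbook] = {}
        self.unhandled: List[str] = []

    def register(self, failure_mode: str, procedure: Runbook) -> None:
        self._runbooks[failure_mode] = procedure

    def respond(self, failure_mode: str) -> str:
        procedure = self._runbooks.get(failure_mode)
        if procedure is None:
            # No procedure yet: the simulated mission aborts, and the gap
            # is recorded so that a run book can be written afterwards.
            self.unhandled.append(failure_mode)
            return "ABORT"
        return procedure()


if __name__ == "__main__":
    registry = RunbookRegistry()
    print(registry.respond("sensor-dropout"))  # first simulation: no run book
    registry.register("sensor-dropout", lambda: "CONTINUE: switch to backup sensor")
    print(registry.respond("sensor-dropout"))  # next simulation: procedure exists
    print(registry.unhandled)
```

The `unhandled` list is the interesting part: it is the backlog of procedures that each failed simulation tells you to go and write.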

Each time the SimSups obfuscated and confused the flight controllers with myriad failures, the controllers would improve their monitoring and telemetry processes so that they could detect the problem faster and more efficiently.

By the time the astronauts and flight controllers were scheduled to fly, they had been subjected to hundreds of chaos experiments; some they survived, some they failed, and they learned from each failure.

One of the very last simulations for Apollo 11 ended in a failed mission because the flight controller in charge of the spacecraft’s computer didn’t respond correctly to error code 1201. But, as described in the first article of the series, by the time of the real mission the flight procedures had been updated to account for the results of the simulation.

Just about every possible contingency was defined as a simulation experiment and tested by the flight controllers. There were of course some extreme cases where you just had to say “this is an unsurvivable scenario, we can’t do anything about this”. For example, if the spacecraft was struck by a large enough random piece of rock while rushing through space, it would be destroyed. The only way to avoid this was either by having two spacecraft capable of fulfilling the entire mission from launch to landing or by armor-plating the spacecraft. Both options were impossible from an engineering and cost perspective. The existing shielding of the spacecraft meant that it could survive most potential meteor strikes, but not an unusually large one. So “hit by a meteor” was simply a known risk the astronauts would have to take.

Another scenario for which there was no experiment or response was “what if both titanium-plated liquid oxygen tanks are destroyed?”. As a matter of fact, no one imagined a scenario in which the spacecraft’s redundant oxygen tanks would both be destroyed and the astronauts would still survive whatever had destroyed them! So, macabre as it sounds, no plan was devised for a scenario that was considered unsurvivable.

Of course, this was the exact Apollo 13 scenario, as described in a previous article!

Similarly, until recently, few Disaster Recovery Plans (DRPs) were designed to survive the simultaneous failure of two or three geographically remote data centers. It’s only in the modern era of hybrid multi-cloud deployments that architects consider such designs as a standard solution.
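One way to reason about such a design is to enumerate every combination of lost sites and check whether the remaining capacity is still enough. The sketch below does exactly that; the site names, capacity units, and the `survivable` function are hypothetical, and a real plan would also have to consider data replication, network partitions, and failover time.

```python
from itertools import combinations
from typing import Dict


def survivable(sites: Dict[str, int], required_capacity: int, failures: int) -> bool:
    """True if losing any `failures` sites still leaves enough capacity."""
    if failures < 0 or failures > len(sites):
        raise ValueError("failures must be between 0 and the number of sites")
    for lost in combinations(sites, failures):
        # Sum the capacity of every site that is not in the lost set.
        remaining = sum(cap for name, cap in sites.items() if name not in lost)
        if remaining < required_capacity:
            return False
    return True


if __name__ == "__main__":
    # Three hypothetical data centers, each able to serve 100 units of load.
    sites = {"dc-east": 100, "dc-west": 100, "dc-north": 100}
    print(survivable(sites, required_capacity=150, failures=1))
    print(survivable(sites, required_capacity=150, failures=2))
```

With these made-up numbers the deployment tolerates the loss of any one site but not of any two, which is the kind of known, accepted risk the Apollo planners had to write down as well.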

But the great benefit of performing simulations (or chaos experiments, as we’d call them today) is that you gain tremendous confidence in the capabilities of your system under stress. When a new problem occurs, you will know how the rest of the system performed in similar-but-not-identical conditions and can use this knowledge to help you.

I’ll be discussing the Apollo 13 mission and many of the lessons to be learned from it by modern Site Reliability Engineers at SREcon20 Americas on December 8, 2020, at 2:55 pm EST, and I’d be delighted to see you there. The full agenda is available here. There are many fantastic sessions to attend.

In the meantime, for those of you developing applications here on Earth and not planning to fly to the Moon for Christmas, here are a few of IBM’s ideas on Chaos Engineering:

I’ll try to get another Apollo 8 themed lesson in before Christmas and the New Year, but in January I will begin discussing lessons relevant to the 35th anniversary of the Challenger disaster.

A short 5-minute session of mine on the lessons from Challenger has been accepted to the upcoming TLV Dev Community conference on the 17th of December. Registration is free and there’s a great lineup of speakers.

You can follow the mission of Apollo 17 in real-time, 49 years later, at https://apolloinrealtime.org/17/

Previous articles in this series:

For future lessons and articles, follow me here as Robert Barron, or as @flyingbarron on Twitter and LinkedIn.

Learn more at www.ibm.com/garage