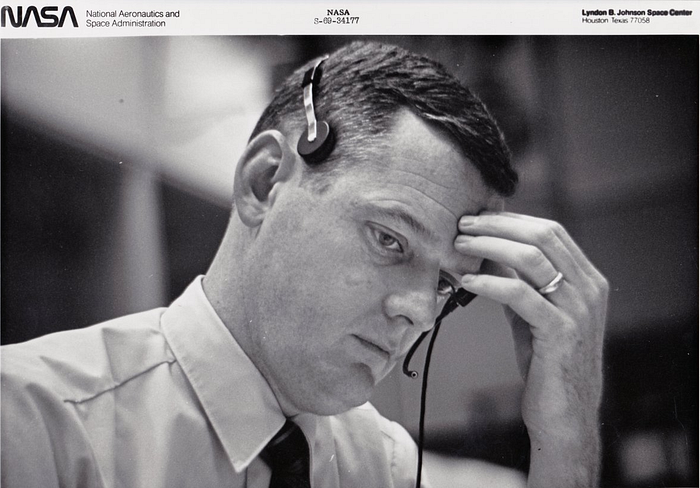

Glynn Lunney — SRE Leadership

Glynn Lunney “Black Flight”, 1936–2021

Glynn Lunney, one of the most senior engineers of the Apollo project, passed away on Friday, 19th of March 2021. Lunney was a leader and an inspiration to reliability engineers for over 50 years.

Glynn Lunney, call sign “Black Flight”, was one of the first of NASA’s flight directors, the engineers who orchestrated the space flights. While the astronauts flew, the flight directors were responsible for the overall success of the mission, overseeing everything from the moment the rocket lifted off until the astronauts were recovered by the Navy. In modern IT parlance, they were Incident Commanders managing the most spectacular deployments of all time!

From their central command console in the Mission Operations Control Room in Houston, they oversaw the cast of thousands supporting each flight and had the final say on any operational decision needed to ensure the success of the mission.

Lunney joined the Space Task Group, the department in NASA responsible for launching the first human into space, at the age of 21, the youngest of the original 45 members. When the department was formed in 1958, manned space flight was still science fiction. As he said, “A lot of the senior engineers thought that the project was crazy, and they were knowledgeable enough in the ways of the world…[I] was not knowledgeable in the ways of the world and said ‘Gee, that looks like it would be a hell of a lot of fun to me — let’s go do that!’” (Apollo, p. 19)

He describes the thinking and consideration that went into the mission planning in this way:

“We began, early on, to conceive of the idea of mission rules; that is: ‘What would we do in these circumstances?’ A whole bunch of our world was driven by ‘what if?’ What if this? What if that? What if this? And this was this chess game we played with Mother Nature, I guess, and hardware as to: What are we going to do if this happens? What are we going to do if that happens?

And gradually we evolved both a way to instrument the spacecraft so that, when we were trying to detect something, that we had various ways of knowing that we indeed were really defining the problem; and then we had to develop this code of ethics about how far were we willing to go in continuing the mission in the face of various kinds of failures?

… But we gradually began to build first off a kind of a philosophy of risk versus gain. [Is] the risk we’re taking appropriate to the gain that we are getting out of any decision that we made?

And then we began to build a framework below that for how much redundancy we wanted to have remaining in order to continue. So that if we lost certain kinds of systems or capabilities, we developed an attitude about how much redundancy we wanted to have to remain in order to continue the whole mission. And if we passed over the threshold of having enough redundancy that we wanted to have to continue, then we were into one of these no-go conditions where, okay, we’re going to start looking for the closest place to come home.”

— Glynn Lunney (NASA Johnson Space Center Oral History Project)

Half a century after the moon landings, Lunney’s words are still relevant to Site Reliability Engineers: not only to those launching rockets, but to anyone responsible for deployments in any technological endeavour.

- Mission Rules: The goals of the deployment (the Service Level Agreements we’re trying to achieve), the automated deployment pipelines, and the runbooks which document how to go about resolving issues that occur.

- What If: Today we’d call it Chaos Engineering and Game Days. NASA called it simulations (before the flights) and used the IBM computers in the Real-Time Computer Center (RTCC) to run virtual experiments during the flights, responding to real world changes.

- Instrument the spacecraft: Observability & monitoring are key to understanding how a spacecraft, a software monolith, or thousands of microservices behave. Yes, in the sixties adding a new sensor would involve welding whilst today it’s simply adding a line of code or modifying a YAML file, but the principles are the same.

- Risk versus Gain: The eternal balancing act between velocity and safety. This can often be quantified as an “error budget”: how much unreliability the service can still absorb, and therefore how many more changes and deployments you can push out in the near future, before it breaches its reliability target.

- Redundancy & Reliability: Needless to say, if the failure of any single component would ruin the mission or cause trouble for end users, that component needs a redundant backup.

- No-go conditions: Every now and then things do really get out of hand during a mission (or an incident) and there’s no choice but to abort the mission, roll back the deployment or apply an emergency fix.
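To make the last three ideas concrete, here is a minimal sketch of how an error budget and a redundancy threshold might feed a go/no-go decision. Everything in it is an assumption made for illustration: the `ServiceStatus` fields, the `go_no_go` function, and the 25% budget threshold are hypothetical and do not come from NASA's mission rules or from any particular SRE tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceStatus:
    """Hypothetical snapshot of a service during a rollout (illustrative fields)."""
    slo_target: float      # e.g. 0.999 means 99.9% of requests should succeed
    total_requests: int    # requests served so far in the SLO window
    failed_requests: int   # requests that failed in the same window
    healthy_replicas: int  # replicas currently passing health checks
    min_replicas: int      # redundancy required before we agree to continue


def error_budget_remaining(status: ServiceStatus) -> float:
    """Return the unspent fraction of the error budget (negative if overspent)."""
    allowed_failures = (1.0 - status.slo_target) * status.total_requests
    if allowed_failures <= 0:
        return 0.0  # no traffic yet, or a 100% target: nothing left to spend
    return 1.0 - status.failed_requests / allowed_failures


def go_no_go(status: ServiceStatus, min_budget: float = 0.25) -> str:
    """Apply two assumed 'mission rules' in order: redundancy first, then budget."""
    if status.healthy_replicas < status.min_replicas:
        return "NO-GO: redundancy below threshold, roll back"
    if error_budget_remaining(status) < min_budget:
        return "NO-GO: error budget nearly spent, pause deployments"
    return "GO"


if __name__ == "__main__":
    status = ServiceStatus(slo_target=0.999, total_requests=1_000_000,
                           failed_requests=400, healthy_replicas=3, min_replicas=2)
    print(go_no_go(status))  # prints "GO": about 60% of the budget remains
```

Checking redundancy before the error budget mirrors the ordering in Lunney's description: once too little redundancy remains, the decision is made regardless of how well the mission has gone so far.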

Lunney started out as a Flight Dynamics Officer (FIDO), the engineer tasked with managing and controlling the flight path of the space vehicle. After all, the primary functional requirement of any mission is making sure the vehicle reaches its target! After moving up to manage the entire FIDO team, Lunney advanced to the position of Flight Director: managing and orchestrating everything that occurs during a flight, balancing the demands of success and safety, and making split-second decisions during emergencies with the lives of the astronauts in the balance.

Lunney was not simply another one of the engineers at NASA; he was one of its leaders and role models. He played a central part in regaining control of Apollo 13 after the mid-flight explosion. Astronaut Ken Mattingly, originally designated Apollo 13's Command Module Pilot before being replaced at the last minute, said of him:

“If there was a hero, Glynn Lunney was, by himself, a hero, because when he walked in the room [after the explosion], I guarantee you, nobody knew what the hell was going on. Glynn walked in, took over this mess, and he just brought calm to the situation. I’ve never seen such an extraordinary example of leadership in my entire career. Absolutely magnificent. No general or admiral in wartime could ever be more magnificent than Glynn was that night. He and he alone brought all of the scared people together. And you’ve got to remember that the flight controllers in those days were — they were kids in their thirties. They were good, but very few of them had ever run into these kinds of choices in life, and they weren’t used to that. All of a sudden, their confidence had been shaken. They were faced with things that they didn’t understand, and Glynn walked in there, and he just kind of took charge.” (Go, Flight!, p. 221)

For dramatic purposes (compressing time and limiting characters) many of his actions were combined with those of Gene Kranz (as played by Ed Harris) in the 1995 movie Apollo 13. Glynn’s character appears, but as a minor character, instead of in the central role he had in reality.

While he may not have been well known to the public, he was highly respected by his peers, who considered him to have “the quickest mind in a business where quickness was a supreme virtue” (Apollo, p. 277). Later in life he was recognized with the National Space Trophy.

I’d like to close with Glynn Lunney’s own words on the Apollo program and one of the reasons it inspires many to this very day:

“…to me, it was a somewhat sacred and noble enterprise. I had the sense that we were doing something for the human species that had never, ever been done before”

(Go, Flight!, p. 318)

For further reading

- Official link: NASA Remembers Legendary Flight Director Glynn Lunney

- You can relive the moment Lunney and his Black Team start their shift saving Apollo 13 on the Apollo in Real Time website.

- The BBC have a fantastic podcast covering Apollo 13 and Lunney’s actions.

For future lessons and articles, follow me here as Robert Barron, or as @flyingbarron on Twitter and LinkedIn.

- Go, Flight! R. Houston and M. Heflin, 2015.

- Apollo. C. Murray and C. B. Cox, 2004.

- NASA Johnson Space Center Oral History Project.